SEO testing involves making controlled changes to your website and measuring their impact on search rankings and organic traffic. Rather than guessing which optimisations will work, SEO testing allows you to experiment with elements like title tags, content structure, meta descriptions, and internal links to determine what actually improves your SEO performance. This data-driven approach reduces uncertainty in your strategy.

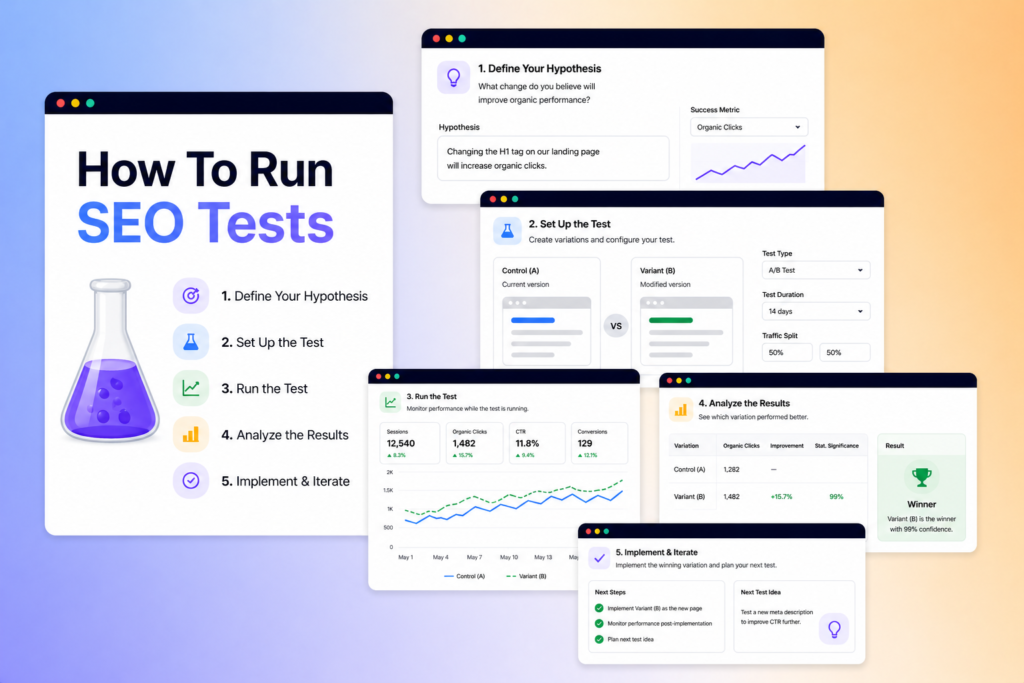

To conduct an SEO test, you identify a hypothesis, select a control and test group of similar pages, implement changes to the test group, and measure the results against SEO metrics like rankings, clicks, and impressions over a defined period. The process requires statistical rigour to ensure any changes you observe are genuine improvements rather than normal fluctuations.

Testing your SEO strategies before full implementation helps you avoid costly mistakes and scale only the tactics that deliver measurable results. This guide walks you through the complete testing framework, from designing valid experiments to analysing outcomes and applying winning changes across your site.

Key Takeaways

- SEO testing uses controlled experiments to validate which optimisations genuinely improve your organic traffic and rankings

- Proper test design requires comparable control and test groups, statistical significance, and sufficient time to measure accurate results

- Successful SEO tests focus on measurable hypotheses and scale proven changes across similar pages for maximum impact

Fundamentals of SEO Testing

SEO testing requires a structured approach where you make controlled changes to your website and measure their impact on rankings and traffic. Your success depends on selecting the right variables, establishing clear hypotheses, and ensuring your results are statistically valid.

Understanding SEO Testing and Experiments

SEO testing is the process of making deliberate changes to your website and measuring their effect on traffic or rankings. You create controlled experiments where you modify specific elements of your pages and track how search engines respond to these changes.

Your SEO experiment typically involves two groups: a control group that remains unchanged and a variant group where you implement your modifications. This allows you to isolate the impact of your changes from other factors affecting your site’s performance.

The testing process differs from traditional marketing tests because search engines don’t provide immediate feedback. You need to wait for crawling, indexing, and ranking updates before you can measure results. This makes patience and proper data collection essential for accurate insights.

Selecting Test Variables and Metrics

Your test variables are the specific elements you modify during an SEO experiment. Common variables include title tags, meta descriptions, content length, internal linking structure, heading tags, and schema markup.

You should focus on variables that directly influence how search engines understand and rank your content. Change one variable at a time to keep your results clear. Testing multiple changes simultaneously makes it difficult to determine which modification drove your performance changes.

Your SEO metrics and KPIs must align with your test objectives. Track organic traffic, click-through rates, impressions, average position, and conversion rates from organic search. Performance data should be collected over a sufficient period to account for search engine update cycles and seasonal variations.

Developing a Test Hypothesis

Your hypothesis states what you expect to happen when you make a specific change to your test pages. A proper hypothesis includes the variable you’re testing, the direction of expected change, and the metric you’ll measure.

Write your hypothesis in a clear format: “If I [make this change], then [this metric] will [increase/decrease] because [logical reasoning]”. For example, “If I increase content length from 500 to 1,500 words, then organic traffic will increase because longer content provides more comprehensive coverage of user queries.”

Your reasoning should be based on SEO principles and search engine behaviour patterns. Avoid assumptions without logical foundations, as they lead to inconclusive tests and wasted resources.
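The hypothesis format above can be captured in a small structure so that every test you log states its change, metric, direction, and reasoning. This is a minimal sketch; the `Hypothesis` class and its field names are illustrative assumptions rather than part of any SEO tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One testable SEO hypothesis (illustrative structure, not a standard)."""
    change: str
    metric: str
    direction: str  # "increase" or "decrease"
    reasoning: str

    def __post_init__(self):
        # Reject vague directions so every hypothesis is falsifiable.
        if self.direction not in ("increase", "decrease"):
            raise ValueError("direction must be 'increase' or 'decrease'")

    def statement(self) -> str:
        return (
            f"If I {self.change}, then {self.metric} will "
            f"{self.direction} because {self.reasoning}."
        )


h = Hypothesis(
    change="increase content length from 500 to 1,500 words",
    metric="organic traffic",
    direction="increase",
    reasoning="longer content provides more comprehensive coverage of user queries",
)
print(h.statement())
```

Forcing the direction to be either "increase" or "decrease" is a deliberate choice: a hypothesis that cannot be wrong cannot be tested.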

The Importance of Statistical Significance

Statistical significance determines whether your test results reflect genuine improvements or random fluctuations. You cannot declare a test successful simply because you observe positive changes during the testing period.

Your results need sufficient data volume and time duration to reach statistical validity. Small sample sizes produce unreliable conclusions that may lead you to implement changes that don’t actually improve performance. Most SEO tests require at least 2-4 weeks of data collection, though complex experiments may need longer periods.

Calculate confidence levels to quantify how likely it is that a difference this large would appear through chance alone. A 95% confidence threshold is the common convention before you implement changes across your entire site. Without proper statistical validation, you risk making decisions based on noise rather than signal in your performance data.
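One way to estimate this without specialist software is a permutation test, which asks how often a random reshuffling of pages between groups would produce a gap as large as the one you observed. The sketch below assumes you have already calculated each page's percentage change in clicks; the figures and the function name `permutation_p_value` are hypothetical.

```python
import random
from statistics import mean


def permutation_p_value(test, control, n_permutations=10_000, seed=0):
    """Two-sided permutation test on the difference in group means."""
    rng = random.Random(seed)
    observed = abs(mean(test) - mean(control))
    pooled = list(test) + list(control)
    n_test = len(test)
    extreme = 0
    for _ in range(n_permutations):
        # Randomly reassign pages to groups and re-measure the gap.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_test]) - mean(pooled[n_test:]))
        if diff >= observed:
            extreme += 1
    # Add-one smoothing so the estimate is never exactly zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical per-page change in clicks (%), test period vs baseline.
test_changes = [12.0, 8.5, 15.2, 9.8, 11.1, 14.3, 7.9, 10.4]
control_changes = [1.2, -0.5, 2.8, 0.3, -1.1, 1.9, 0.7, -0.2]

p_value = permutation_p_value(test_changes, control_changes)
significant = p_value < 0.05  # corresponds to the 95% confidence convention
print(f"p-value: {p_value:.4f}, significant: {significant}")
```

A p-value below 0.05 corresponds to the 95% convention. Dedicated testing platforms may use other methods, so treat this as a sanity check rather than a replacement for them.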

Types of SEO Tests and Experiment Design

Different testing methodologies suit different scenarios, from comparing individual page changes to measuring template-wide updates across thousands of URLs. Understanding how split testing differs from time-based approaches and when to apply serial versus multivariate methods helps you select the right framework for your specific goals.

SEO A/B Testing vs Split Testing

SEO A/B testing involves splitting a set of comparable pages, rather than visitors, into two groups and measuring which group performs better. You assign pages to either a control group that keeps the original version or a variant group that receives your changes.

Split testing in SEO differs from traditional user-focused A/B testing because you cannot control which version a search engine crawls. Instead, you create matched groups of similar pages and apply changes to one group whilst the other remains unchanged. This approach works particularly well for sites with many similar pages, such as category pages or product listings.

The key challenge is ensuring your test pages are truly comparable. You need pages with similar historical performance, traffic levels, and ranking positions. Without proper matching, external factors like seasonality or algorithm updates can skew your results and lead to incorrect conclusions about what actually drove any changes in performance.
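A simple way to build comparable groups is to rank pages by a baseline metric, pair neighbours, and randomly send one page from each pair to each group. The sketch below assumes baseline clicks are the matching metric; the URLs, figures, and the `matched_groups` helper are hypothetical.

```python
import random


def matched_groups(baseline, seed=42):
    """Split pages into control and test lists with similar baseline traffic.

    baseline: dict mapping URL -> clicks during the baseline period.
    """
    rng = random.Random(seed)
    ranked = sorted(baseline, key=baseline.get, reverse=True)
    control, test = [], []
    # Pair adjacent pages in the ranking; an odd page at the end is left out.
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # random assignment within each matched pair
        control.append(pair[0])
        test.append(pair[1])
    return control, test


# Hypothetical baseline clicks for seven similar category pages.
baseline_clicks = {
    "/shoes/a": 980, "/shoes/b": 940, "/shoes/c": 510, "/shoes/d": 495,
    "/shoes/e": 120, "/shoes/f": 115, "/shoes/g": 60,
}
control, test = matched_groups(baseline_clicks)
print("control:", control)
print("test:", test)
```

Matching on one metric is a simplification. In practice you might also match on average position or page template before assigning groups.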

Before-and-After and Time-Based Approaches

Before-and-after testing measures performance metrics before you implement a change and compares them to the same metrics afterwards. This method suits situations where you lack enough similar pages to create proper control and test groups.

Time-based tests require careful attention to test duration. You need sufficient data to account for normal traffic fluctuations, typically running experiments for at least two to four weeks. Shorter periods risk confusing random variation with genuine impact from your changes.

The main weakness of before-and-after testing is the inability to separate your changes from external factors. Algorithm updates, seasonal trends, or competitor actions occurring during your test period can all affect results. You should monitor industry-wide ranking changes and Google algorithm update announcements to identify potential confounding variables.

Serial, Multivariate, and Group Page Testing

Serial testing examines one variable at a time across your test pages. You might test title tag changes first, measure results, then test meta description modifications separately. This approach provides clear attribution but requires more time to test multiple elements.

Multivariate testing changes multiple test variables simultaneously to understand how different elements interact. You might alter title tags, headings, and internal links together, ideally applying different combinations to different page groups. This method accelerates testing but makes isolating individual element impact more difficult and needs more pages to reach significance.

Group tests apply changes to categories of similar pages rather than individual URLs. You might update all product pages in one category whilst leaving another category unchanged. This method suits template-level changes and provides faster results through aggregate data from multiple pages sharing similar characteristics.

Implementing and Measuring SEO Tests

Successful SEO testing requires selecting the right pages, monitoring meaningful metrics, and accounting for external factors that could skew your results. Proper implementation and measurement separate actionable insights from misleading data.

Identifying High-Impact Pages to Test

Focus your testing efforts on pages where improvements will generate the most significant impact. High-traffic pages should be your primary target because changes here affect larger volumes of impressions and clicks, making it easier to detect meaningful differences in performance data.

Product pages and category pages often represent the best testing opportunities. These pages typically receive substantial traffic and directly influence revenue. You can identify these opportunities through Google Search Console by filtering for pages with high impressions but lower-than-expected click-through rate.

Pages ranking in positions 4-10 deserve special attention. They receive enough visibility to generate measurable traffic but have clear room for improvement. You can use Google Analytics alongside Search Console to identify pages with good average position but disappointing engagement metrics like high bounce rate or low time on page.

Avoid testing low-traffic pages initially, as you’ll need to wait months before collecting enough data to reach statistical significance. Group similar low-traffic pages together if you want to test changes across less-visited sections of your site.
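The selection rules above can be expressed as a simple filter over exported Search Console data. This is a sketch under stated assumptions: the `PageStats` fields mirror the standard Search Console metrics, while the thresholds (1,000 impressions, 3% CTR) and the sample rows are hypothetical and should be tuned to your site.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions: int
    clicks: int
    avg_position: float

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def find_candidates(pages, min_impressions=1000, max_ctr=0.03):
    """Pages in positions 4-10 with high visibility but weak CTR."""
    picked = [
        p for p in pages
        if 4 <= p.avg_position <= 10
        and p.impressions >= min_impressions
        and p.ctr <= max_ctr
    ]
    # Largest audiences first, since they reach significance fastest.
    return sorted(picked, key=lambda p: p.impressions, reverse=True)


# Hypothetical rows, as if exported from a Search Console pages report.
pages = [
    PageStats("/category/boots", 12000, 240, 6.2),
    PageStats("/category/sandals", 800, 10, 5.1),
    PageStats("/product/trail-runner", 5000, 400, 4.8),
    PageStats("/product/rain-jacket", 3000, 60, 9.4),
    PageStats("/blog/size-guide", 20000, 300, 14.0),
]
candidates = [p.url for p in find_candidates(pages)]
print(candidates)
```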

Choosing and Tracking Relevant KPIs

Select metrics that directly reflect your test hypothesis. If you’re testing title tag changes, focus on click-through rate and impressions in Google Search Console rather than conversion metrics. For content modifications, track search rankings, organic clicks, and engagement signals.

Essential metrics to monitor include:

- Average position for tracking ranking changes

- Impressions to measure search visibility

- Clicks for actual traffic volume

- CTR to assess how compelling your listings appear

- Bounce rate and time on page for engagement quality

Track both primary and secondary metrics. Your primary metric should align directly with your test goal, whilst secondary metrics help you understand broader impact. Testing meta descriptions might improve CTR (primary) but you should also monitor whether the quality of traffic changes (secondary).

Set baseline measurements before implementing changes. Record at least two weeks of stable performance data to establish normal fluctuation ranges for your chosen KPIs.
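A normal fluctuation range can be approximated as the baseline mean plus or minus two standard deviations. The sketch below assumes 14 days of daily clicks; the figures, the `baseline_range` helper, and the choice of two standard deviations are illustrative assumptions.

```python
from statistics import mean, stdev


def baseline_range(daily_values, k=2.0):
    """Return (low, high) bounds of mean +/- k sample standard deviations."""
    centre = mean(daily_values)
    spread = stdev(daily_values)
    return centre - k * spread, centre + k * spread


# Hypothetical daily clicks over a 14-day baseline period.
baseline_clicks = [102, 98, 110, 95, 105, 99, 101, 104, 97, 108, 100, 96, 103, 106]
low, high = baseline_range(baseline_clicks)


def is_unusual(value):
    """True when a day's clicks fall outside the normal baseline range."""
    return value < low or value > high


print(f"normal range: {low:.1f} to {high:.1f}")
print(is_unusual(130), is_unusual(100))
```

Days outside this band deserve a closer look, but a single unusual day is not evidence that a test has worked.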

Collecting and Analysing Performance Data

Extract search performance data from Google Search Console regularly throughout your test period. Download reports at consistent intervals to track how impressions, clicks, and average position evolve over time. Compare test pages against control pages or pre-test benchmarks to isolate the effect of your changes.

Look for consistent trends rather than single-day spikes. Search rankings naturally fluctuate, so you need multiple data points to confirm whether changes represent genuine improvements. Calculate percentage changes rather than absolute numbers to better understand relative impact.
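A seven-day rolling average smooths out weekday and weekend swings so that trends stand out from single-day spikes. The sketch below uses hypothetical daily click counts and an illustrative `rolling_mean` helper, then expresses the shift as a percentage.

```python
def rolling_mean(values, window=7):
    """Average each run of `window` consecutive values."""
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


# Hypothetical daily clicks: two baseline weeks, then two weeks after the change.
daily_clicks = (
    [100, 104, 98, 101, 99, 60, 62] * 2
    + [112, 115, 109, 113, 110, 70, 71] * 2
)
smoothed = rolling_mean(daily_clicks)

# Compare the first and last smoothed values as a relative change.
before, after = smoothed[0], smoothed[-1]
change_pct = (after - before) * 100 / before
print(f"{change_pct:.1f}% change in the 7-day average")
```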

Cross-reference Search Console data with Google Analytics to build a complete picture. Search Console shows you how pages perform in search results, whilst Analytics reveals what happens after visitors arrive. A test might improve CTR but increase bounce rate, indicating that your new titles create misleading expectations.

Use comparison date ranges to evaluate performance against the same period from previous weeks or months. This approach helps you distinguish test effects from normal growth patterns or external factors affecting your site.

Dealing with Seasonality and Confounding Factors

Account for seasonal traffic patterns when planning test duration and interpreting results. Retail sites experience predictable fluctuations around holidays, whilst B2B sites often see drops during summer months and year-end. These patterns can mask or exaggerate your test results if ignored.

Extend your test duration to cover complete seasonal cycles when possible. A four-week minimum allows you to capture weekly patterns, but tests running through seasonal peaks may need eight to twelve weeks for accurate assessment. Compare your test period against the same timeframe from the previous year to contextualise changes.

Common confounding factors include:

- Algorithm updates affecting search rankings

- Competitor changes in search results

- Site-wide technical issues impacting performance

- Marketing campaigns driving additional traffic

- External events influencing search behaviour

Monitor your control pages or similar untested pages to detect site-wide changes. If both test and control pages show similar movement, external factors rather than your test likely caused the change. Document any significant events during your test period, including technical issues, algorithm updates, or promotional activities that might influence your metrics.
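The comparison against control pages can be reduced to a difference-in-differences calculation: subtract the control group's percentage change from the test group's, and what remains is the movement not explained by site-wide factors. The figures and function names below are hypothetical.

```python
def pct_change(before, after):
    """Relative change from before to after, in percent."""
    return (after - before) * 100 / before


def diff_in_diff(test_before, test_after, control_before, control_after):
    """Test-group change minus control-group change, in percentage points."""
    return pct_change(test_before, test_after) - pct_change(control_before, control_after)


# Hypothetical total clicks per group, baseline period vs test period.
net_effect = diff_in_diff(
    test_before=1000, test_after=1200,       # test pages rose 20%
    control_before=1000, control_after=1150,  # control pages rose 15%
)
print(f"net effect: {net_effect:+.1f} percentage points")
```

In this example, most of the apparent 20% gain also shows up in the control group, so only about five percentage points can plausibly be attributed to the change.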

The Best Tools To Use

Selecting the right tools makes SEO testing more efficient and data-driven. You’ll need platforms that can track rankings, monitor technical changes, and measure the impact of your experiments.

Semrush stands out as a comprehensive solution for running controlled SEO tests. It provides position tracking, site auditing, and competitive analysis features that help you measure changes accurately. You can monitor keyword rankings before and after implementing tests.

For technical audits, Screaming Frog excels at crawling websites to identify issues like broken links, duplicate content, and metadata problems. This tool is particularly valuable when testing technical SEO changes across large sites.

Google Search Console remains essential for any testing process. You access real performance data directly from Google, including impressions, clicks, and average positions. It shows how search engines actually respond to your changes.

Dedicated SEO testing platforms enable you to run controlled experiments and assess whether the results are statistically significant. These specialised tools help you separate correlation from causation by comparing test pages against control groups.

ChatGPT and AI tools have become useful for SEO workflows in 2026, particularly for generating test hypotheses and analysing patterns in your data. However, you should verify AI suggestions with actual testing.

DebugBear monitors Core Web Vitals and technical performance metrics continuously. You’ll spot performance regressions quickly when testing site speed optimisations or infrastructure changes.

Most professionals combine multiple tools rather than relying on a single platform. You might use Screaming Frog for audits, Search Console for validation, and a dedicated testing platform for controlled experiments.

Practical Test Opportunities and Best Practices

Strategic SEO testing requires focusing on high-impact elements like title tags and meta descriptions, content architecture, technical implementations, and measurement accuracy. Testing these components systematically helps you understand how changes affect your SERP performance and user experience whilst providing data to inform future optimisations.

Improving Title Tags and Meta Descriptions

Your title tags and meta descriptions serve as the first point of contact between your content and users in search results. When testing title tags and meta descriptions, focus on specific variables such as keyword placement, character length, and the inclusion of power words or numbers.

Test one variable at a time to isolate what drives improvements in click-through rates. For example, you might test whether positioning your primary keyword at the beginning of the title tag versus the middle affects rankings and engagement signals. Similarly, experiment with meta description length—whilst Google may truncate descriptions beyond 155-160 characters, some tests show that longer descriptions can still perform well depending on search intent.

Create a control group of pages that remain unchanged whilst applying modifications to a test group. Track metrics including impressions, clicks, and average position through your SEO testing tools over at least four weeks to give search engines time to recrawl and process your changes.
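Before a title or description test goes live, it helps to label each page by the variable under test so the groups are cleanly separated. The sketch below classifies keyword placement and flags descriptions likely to be truncated. The helper names are hypothetical, and the 160-character limit is a rough guide taken from the range mentioned above, not a fixed rule.

```python
def keyword_position(title: str, keyword: str) -> str:
    """Classify where the keyword sits in the title (case-insensitive)."""
    idx = title.lower().find(keyword.lower())
    if idx == -1:
        return "missing"
    if idx == 0:
        return "start"
    if idx + len(keyword) == len(title):
        return "end"
    return "middle"


def may_truncate(description: str, limit: int = 160) -> bool:
    """True when the description exceeds the assumed display limit."""
    return len(description) > limit


# Hypothetical titles for two variants of the same test.
print(keyword_position("Running Shoes for Trail and Road | Acme", "running shoes"))
print(keyword_position("Buy Trail Running Shoes Online", "running shoes"))
print(may_truncate("A" * 170))
```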

Testing Content Structure and Internal Linking

Content structure directly impacts how search engines understand your pages and how users consume your information. Test variables like content length, heading hierarchy, and the implementation of elements such as table of contents or featured snippet optimisations.

When evaluating content updates, consider testing the addition of long-form content versus shorter, more focused pieces based on search intent. You might discover that certain topics rank better with comprehensive 2,000-word guides, whilst others perform optimally at 800 words. Conducting structured SEO tests helps identify these patterns through measurable data.

Your internal linking strategy offers substantial testing opportunities. Experiment with the number of internal links per page, anchor text variations, and whether linking to cornerstone content improves rankings for both the source and destination pages. Test adding contextual links within body content versus navigation-based links to determine which approach strengthens topical authority.

Schema Markup, Backlinks, and Technical Variables

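Schema markup implementation can make your pages eligible for rich results, which can significantly improve visibility and click-through rates. Test different schema types relevant to your content, such as FAQ schema, HowTo schema, or Article schema, to determine which generates the most engagement signals. Check Google’s current rich result documentation before you start, because eligibility changes: FAQ rich results are now restricted to a small set of authoritative sites, and HowTo rich results have been retired.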
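For template-level tests, schema markup is usually generated rather than hand-written. The sketch below builds an Article JSON-LD block using properties defined by schema.org (`headline`, `author`, `datePublished`). The function name and sample values are hypothetical, and your templates may need additional properties.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str) -> str:
    """Return a script tag containing Article structured data."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Hypothetical article details for a page in the test group.
snippet = article_jsonld("How to Run an SEO Test", "Jane Example", "2026-01-12")
print(snippet)
```

Validate the generated markup with a structured data testing tool before rolling it out to the test group.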

When testing backlinks, focus on variables you can control or influence. This includes the anchor text diversity in guest posts, the placement of links within content (early versus late in articles), and whether links from topically relevant sites provide more ranking benefit than those from high-authority but unrelated domains.

Technical variables warrant careful testing, particularly during a site redesign or when implementing AI-generated content from LLMs. Test page speed improvements, mobile responsiveness changes, or Core Web Vitals optimisations individually. For sites incorporating AI-generated content, compare performance metrics against human-written content to ensure quality standards align with search engine expectations.

| Test Category | Key Variables | Measurement Period |
|---|---|---|
| Schema Markup | Schema type, placement | 4-6 weeks |
| Backlinks | Anchor text, source relevance | 8-12 weeks |
| Technical SEO | Page speed, Core Web Vitals | 4-8 weeks |

Ensuring Accurate Indexing and Reporting Outcomes

Proper indexing ensures your tests actually reach search engines and affect rankings. Before declaring test results, verify that search engines have crawled and indexed your changes. Use Google Search Console to check indexing status and submit updated pages for re-crawling when necessary.

Your SEO testing process must include robust documentation of all changes, dates, and observed outcomes. Record baseline metrics before implementing changes, then track performance at regular intervals. Note any external factors—such as algorithm updates, seasonal trends, or competitor actions—that might influence results.

Establish clear success criteria before testing begins. Define what constitutes a meaningful improvement, whether that’s a 10% increase in organic traffic, improved rankings for target keywords, or enhanced engagement metrics like time on page and bounce rate. Statistical significance matters; avoid drawing conclusions from small sample sizes or short timeframes that don’t account for normal fluctuations in search performance.
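Writing the success criteria down as code before the test starts makes it harder to move the goalposts afterwards. The sketch below is one possible shape. The thresholds (10% uplift, p-value of 0.05, 28 days) echo figures used in this guide, while the `SuccessCriteria` class and its verdict labels are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    min_uplift_pct: float = 10.0  # smallest improvement worth rolling out
    max_p_value: float = 0.05     # 95% confidence convention
    min_days: int = 28            # minimum test duration

    def verdict(self, uplift_pct: float, p_value: float, days_run: int) -> str:
        if days_run < self.min_days:
            return "keep running"
        if p_value > self.max_p_value:
            return "inconclusive"
        if uplift_pct >= self.min_uplift_pct:
            return "win"
        return "no meaningful uplift"


criteria = SuccessCriteria()
print(criteria.verdict(uplift_pct=12.0, p_value=0.01, days_run=35))
```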

Frequently Asked Questions

SEO testing raises specific questions about search engine mechanics, measurement approaches, and implementation methods. These answers address the practical concerns that arise when developing and executing test strategies.

How do you set up an SEO test plan for a new website?

Start by establishing baseline measurements before making any changes. Record your current rankings for target keywords, organic traffic levels, click-through rates, and conversion data so you can measure the impact of future modifications.

Define specific hypotheses you want to test rather than making random changes. For example, you might hypothesise that adding schema markup to product pages will increase click-through rates by 15% within 30 days.

Select a testing methodology appropriate to your site’s structure. If you have enough similar pages, use split testing where some pages receive changes whilst control pages remain unchanged. For sites with fewer pages, implement changes sequentially and monitor performance against historical data and expected seasonal trends.

Document every change with the date, affected pages, and expected outcome. This creates an audit trail that helps you attribute performance shifts to specific modifications rather than external factors like algorithm updates or seasonal demand changes.
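A change log does not need special software; a CSV file with one row per change is enough to build the audit trail. The sketch below uses only the Python standard library. The `ChangeRecord` fields follow the items listed above, and the sample entry is hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class ChangeRecord:
    changed_on: date
    pages: str
    change: str
    expected_outcome: str


def to_csv(records) -> str:
    """Serialise change records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ChangeRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical log entry for a schema markup test.
log = [
    ChangeRecord(
        changed_on=date(2026, 1, 12),
        pages="/product/* (test group)",
        change="Added Product schema markup",
        expected_outcome="CTR up 15% within 30 days",
    )
]
print(to_csv(log))
```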

Which SEO metrics should you track to judge whether changes are working?

Organic traffic volume shows whether more users are finding your pages through search engines. Track this at both the site level and for specific pages or groups affected by your test to isolate the impact of changes.

Rankings for target keywords indicate your visibility in search results. Monitor position changes for the specific queries you’re targeting, but remember that rankings alone don’t guarantee traffic or conversions.

Click-through rate from search results reveals how compelling your titles and meta descriptions are to searchers. Even a small improvement in CTR can significantly increase traffic without ranking changes.

Conversion rate and goal completions measure whether the traffic you attract actually fulfils your business objectives. An SEO change that increases traffic but reduces conversions may harm overall performance.

Page load speed and Core Web Vitals affect both user experience and rankings. Track these technical metrics because they influence how search engines evaluate your pages.

How can you run A/B or split tests for SEO without risking rankings?

Use server-side testing rather than client-side JavaScript implementations that can confuse search engine crawlers. Ensure that both Googlebot and users see the same content to avoid cloaking penalties.

Apply a consistent canonical URL structure so you don’t create duplicate content issues. If you’re testing different versions of a page, make sure search engines understand which version should be indexed.

Test on a subset of similar pages rather than your entire site. For example, if you’re testing a new title tag format, apply it to half of your product pages whilst keeping the other half as controls. This approach, known as controlled SEO testing, removes guesswork and provides evidence of what works.
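When the split needs to happen server-side inside a page template, hashing the URL gives a stable assignment that does not depend on a stored list. The sketch below is one way to do it. The `assign_group` function and the salt value are hypothetical, and because hashing ignores traffic levels you should still check that the two groups have comparable baselines.

```python
import hashlib


def assign_group(url: str, salt: str = "title-test-1") -> str:
    """Deterministically place a URL in the 'test' or 'control' group."""
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    # An even hash value means test, an odd one means control (roughly 50/50).
    return "test" if int(digest, 16) % 2 == 0 else "control"


# Hypothetical product URLs.
urls = [f"/product/item-{n}" for n in range(1000)]
groups = [assign_group(u) for u in urls]
print(groups.count("test"), groups.count("control"))
```

Changing the salt for each new experiment reshuffles the groups, so the same pages are not always in the test group.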

Avoid testing for too short a period. Search engines need time to recrawl pages, process changes, and adjust rankings. Most SEO tests require at least 2-4 weeks to produce reliable data, though competitive keywords may need longer.

Monitor your test groups for statistically significant differences. Small sample sizes or short timeframes can produce misleading results that appear positive but don’t represent real improvements.
